Why both research and engineering?

Philosophy

Most HRI researchers use commercial robots. Most roboticists never study human interaction. I believe doing both leads to better science and better machines.

Working at the intersection gives me a broader perspective when designing robots. As an HRI researcher, I understand what actually matters for human-robot interaction studies: repeatability, embodiment cues, real-time responsiveness, and participant comfort. Some aspects of performance can't be captured by a single metric; they only emerge from watching many people interact with a system, which is what I have been able to do in my three years at the Link Lab.

As a roboticist, I engineer open-source, cheaper alternatives to commercial humanoid systems, designed specifically for HRI labs. The goal isn't to replicate expensive telepresence robots; it's to build tools optimized for research workflows: easy to repair, modular, well-documented, and deployable in multi-participant studies where hardware reliability matters more than polish.

This dual focus means I can run studies, identify what's broken in the hardware, and iterate using real user feedback. It's an uncommon workflow for a student, and HRI research is what made it possible. So far I have run over 7,800 minutes of user testing across two custom-built systems, with one published paper and another in progress.

8-DOF HAND · MEDIAPIPE

Engineering projects

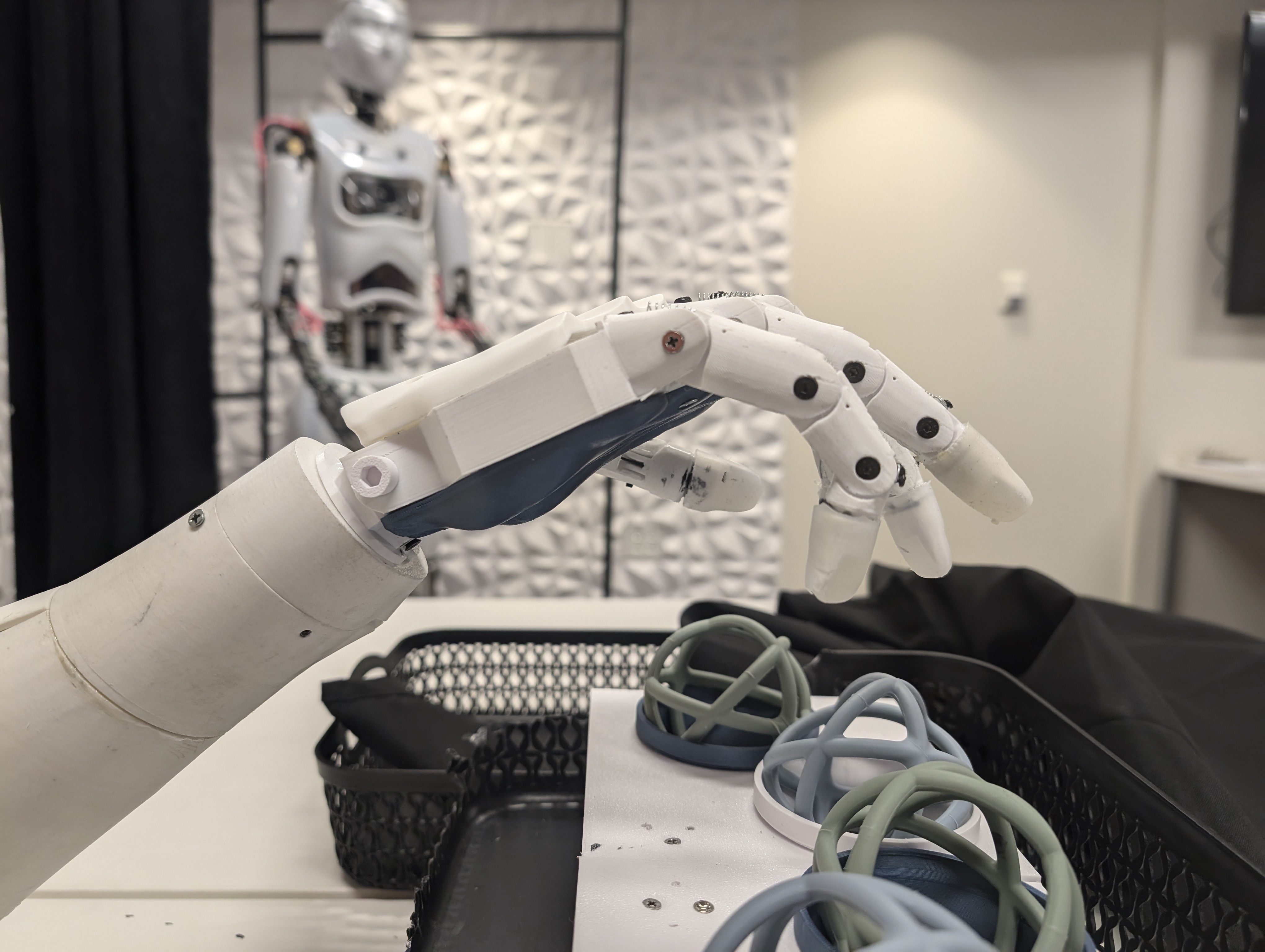

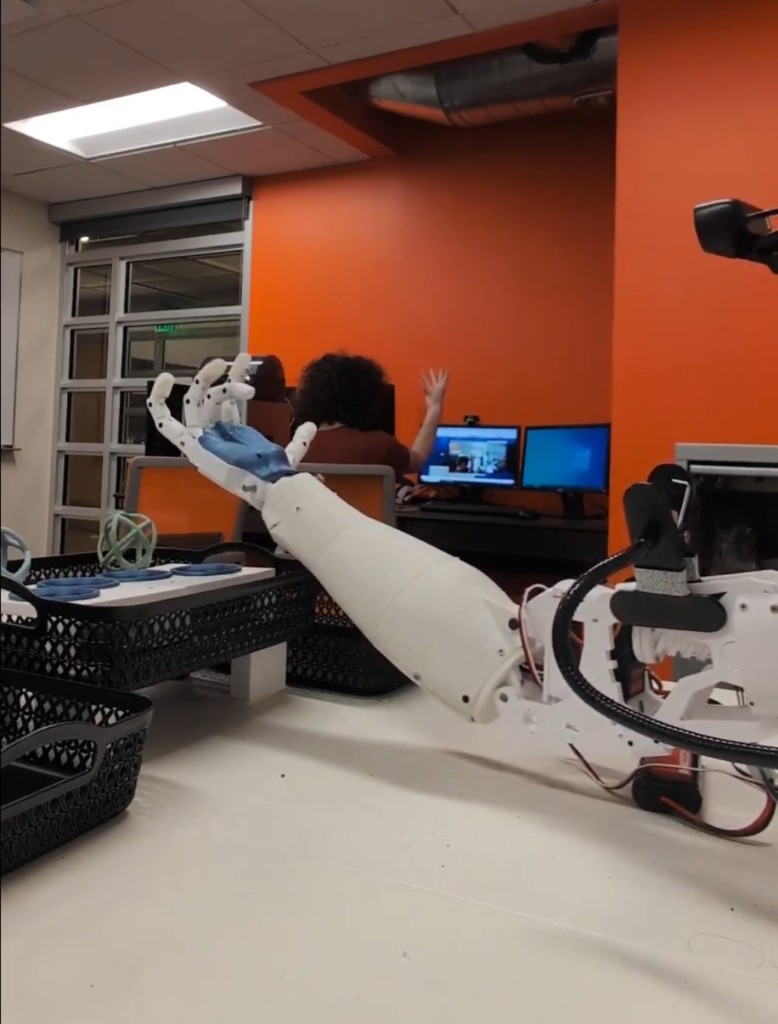

Hardware · Software · Deployment

8-DoF Humanoid Hand

Real-time hand tracking teleoperation system with MediaPipe integration, deployed in a 4,500+ minute user study.

Designed and assembled an 8 degree-of-freedom humanoid hand optimized for teleoperation research. The system uses Google MediaPipe for real-time hand tracking, mapping operator hand poses to servo positions at 30+ fps with minimal latency.
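As a rough illustration of the pose-to-servo mapping described above, the sketch below computes the bend angle at one finger joint from three tracked landmarks and maps it linearly to a servo command. The landmark indices follow MediaPipe Hands' published 21-point layout (5, 6, 7 are the index finger MCP, PIP, and DIP). The calibration angles, servo range, and function names are assumptions for illustration, not the values or code used on the actual hand.

```python
import math

# MediaPipe Hands landmark indices for the index finger (MCP, PIP, DIP).
INDEX_MCP, INDEX_PIP, INDEX_DIP = 5, 6, 7


def joint_angle_deg(a, b, c):
    """Angle at point b, in degrees, between the segments b->a and b->c."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(v1, v2))
    norm = math.sqrt(sum(x * x for x in v1)) * math.sqrt(sum(x * x for x in v2))
    if norm == 0.0:
        return 180.0  # degenerate landmarks: treat the finger as straight
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


def flexion_to_servo(angle_deg, open_deg=180.0, closed_deg=60.0,
                     servo_min=0.0, servo_max=180.0):
    """Linearly map a joint angle to a servo command, clamped to the servo range.

    open_deg / closed_deg are assumed calibration values (hypothetical).
    """
    t = (open_deg - angle_deg) / (open_deg - closed_deg)
    t = max(0.0, min(1.0, t))
    return servo_min + t * (servo_max - servo_min)


def index_servo_command(landmarks):
    """landmarks: sequence of 21 (x, y, z) points in MediaPipe Hands order."""
    angle = joint_angle_deg(landmarks[INDEX_MCP],
                            landmarks[INDEX_PIP],
                            landmarks[INDEX_DIP])
    return flexion_to_servo(angle)
```

A straight finger (collinear landmarks) maps to the open end of the servo range, and tighter curls map toward the closed end. A real system would calibrate the open and closed angles per operator.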

The mechanical design prioritizes repairability and modularity — each finger is independently serviceable, with 3D-printed linkages and off-the-shelf servos. The embedded control runs on a Raspberry Pi with a custom Python pipeline handling pose estimation, inverse kinematics, and servo control over serial.
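The last stage of such a pipeline, turning target angles into bytes for the serial link, might look like the sketch below. The frame layout (a `0xFF` header, a count byte, one byte per servo angle, and an additive checksum) is a hypothetical protocol invented for this example, and the smoothing factor is likewise an assumption. The source does not describe the actual wire format.

```python
def smooth(previous, target, alpha=0.5):
    """Exponentially smooth servo targets to reduce jitter from noisy tracking."""
    return [p + alpha * (t - p) for p, t in zip(previous, target)]


def encode_frame(angles_deg):
    """Pack servo angles into a frame for a hypothetical serial protocol.

    Layout (assumed): 0xFF header, servo count, one byte per angle (0-180),
    then a checksum equal to the sum of the angle bytes modulo 256.
    """
    payload = bytes(max(0, min(180, round(a))) for a in angles_deg)
    checksum = sum(payload) & 0xFF
    return bytes([0xFF, len(payload)]) + payload + bytes([checksum])
```

With pySerial installed, a frame like this could be sent with `serial.Serial(port, baudrate).write(frame)`, where the port name and baud rate depend on the microcontroller setup.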

The hand was deployed in a multi-week study exploring operator embodiment during teleoperation tasks. It survived 4,500+ minutes of continuous user testing across diverse participants with minimal hardware failures.

HAND SYSTEM

IN ACTION

MEDIAPIPE OVERLAY

HEAD SYSTEM · 16-DOF

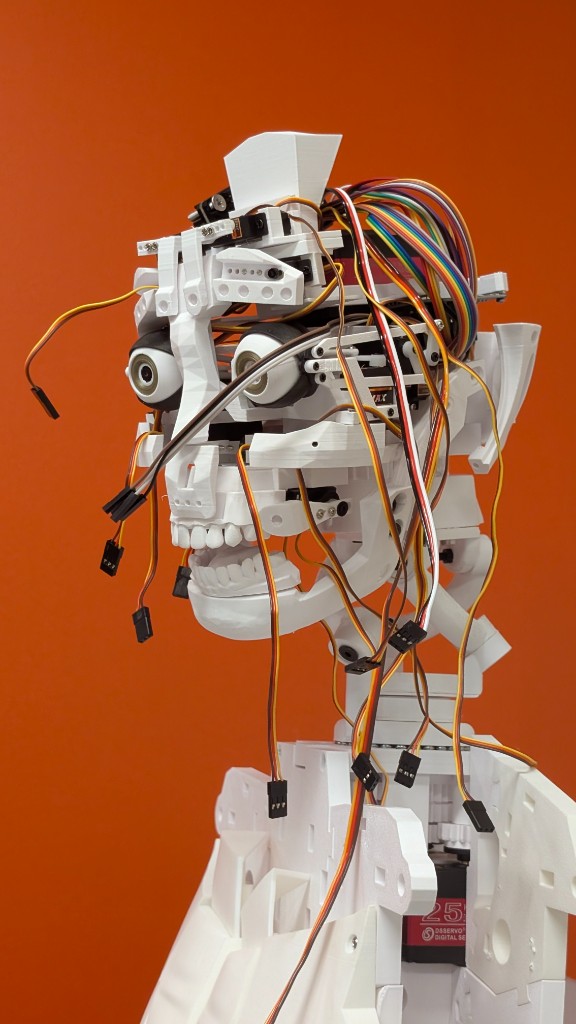

16-DoF Humanoid Robotic Head

Soft-face humanoid head with real-time facial expression teleoperation using FPENet, deployed in a 3,300+ minute facial mimicry study.

The head has 16 degrees of freedom and a soft silicone face, and is designed for researching facial mimicry and presence in social teleoperation. The system runs a custom implementation of FPENet (NVIDIA's Fiducial Points Estimator Network) on an NVIDIA Jetson, achieving real-time facial tracking and expression transfer.

The mechanical design uses servo-driven linkages beneath a compliant silicone skin, allowing for naturalistic facial expressions. The control architecture maps detected facial landmarks to servo positions using a learned inverse kinematics model, trained on thousands of expression samples.
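To make the idea of a learned landmark-to-servo mapping concrete, here is a minimal sketch that uses a nearest-neighbour lookup over recorded samples. This is a deliberately simple stand-in, not the model used on the head: the source does not specify the model type, and the feature vectors, sample format, and function name here are all hypothetical.

```python
import math


def knn_servo_targets(features, samples, k=3):
    """Estimate servo positions for a landmark feature vector.

    samples: list of (feature_vector, servo_vector) pairs recorded during
    calibration (hypothetical format). Returns the inverse-distance-weighted
    average of the servo vectors of the k nearest samples.
    """
    nearest = sorted(samples, key=lambda s: math.dist(features, s[0]))[:k]
    # Small epsilon avoids division by zero when a sample matches exactly.
    weights = [1.0 / (math.dist(features, f) + 1e-9) for f, _ in nearest]
    total = sum(weights)
    n_servos = len(nearest[0][1])
    return [
        sum(w * servo[j] for w, (_, servo) in zip(weights, nearest)) / total
        for j in range(n_servos)
    ]
```

A lookup like this is easy to inspect and needs no training step, but it scales poorly with sample count. A regression model or small neural network would be the more typical choice when thousands of samples are available.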

Every aspect — mechanical design, embedded software, computer vision pipeline, and facial actuation mapping — was designed, assembled, and coded by me. The system was deployed in a 3,300+ minute user study exploring how facial teleoperation affects operator presence and participant perception in social HRI scenarios.

Combined user testing across both systems with 250+ participants in controlled HRI studies

8-DoF hand + 16-DoF head, enabling rich embodied teleoperation experiences

Designed, assembled, and coded entirely by me — from CAD to control loops to computer vision

HRI research contributions

Publications · Ongoing work

Published: TARX Scale Validation

Co-authored paper validating the TARX (Telepresence Affective Robot eXperience) scale for measuring affective robot-operator relationships.

This research used the 16-DoF humanoid robotic head to validate TARX, a new psychometric scale for measuring the affective quality of robot-operator relationships in telepresence scenarios. The study deployed the facial teleoperation system with participants to examine how embodied control influences operator attachment and perceived social presence.

The paper was published at HICSS 2025 and contributes foundational measurement tools for the HRI research community. The humanoid head's capability for real-time facial expression mirroring was critical to creating sufficiently rich social interaction conditions for scale validation.

Ongoing: Facial Mimicry Study

Investigating how real-time facial expression teleoperation affects operator presence and participant perception in social HRI scenarios.

This study explores whether facial mimicry in teleoperated humanoid systems enhances social presence and improves interaction quality in human-robot conversations. Using the 16-DoF head with FPENet facial tracking, participants engaged in structured social tasks while the system mirrored operator facial expressions in real time.

The research investigates three conditions: full facial teleoperation, static neutral expression, and no face. Preliminary findings suggest that real-time facial mimicry significantly increases perceived presence and social engagement, but with interesting nuances around uncanny valley effects and operator cognitive load.

This work is currently being written into a paper for submission to a major HRI conference. The 3,300+ minutes of user testing data provided rich quantitative and qualitative insights into the role of facial expression in telepresence robotics.